‘I’d just been absorbing whatever ChatGPT told me’: My experiment following the Iran war revealed a much bigger problem with AI

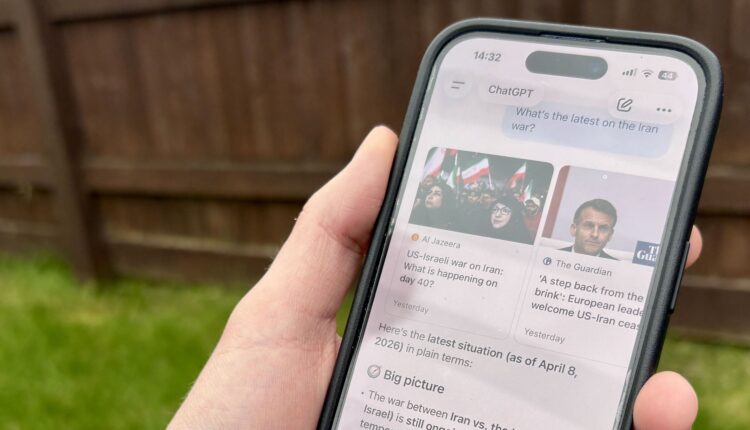

A few weeks ago I started deliberately using ChatGPT to follow the latest news about the Iran war. It was partly a test to see how chatbots compare to traditional news sites at presenting real-time information, and partly because the pace of the news at the time was overwhelming.

But at some point I noticed I hadn’t clicked through to verify a single thing. I’d just been absorbing whatever ChatGPT told me.


Tracking the change

Using AI for search hasn’t always been a good idea. Not all that long ago, ChatGPT didn’t have access to real-time information. Google’s AI Overviews was recommending people add glue to pizza to help the cheese stick and suggesting eating a rock a day. The problems with relying on AI for real-time, accurate information were obvious and easy to spot.

But a lot has improved in the past year. Models are now more accurate, information is more up to date (with many chatbots now accessing the internet in real-time) and sources are more likely to be cited.

AI search has shifted into what Ofcom recently called “answer engines” — tools that don’t just point us towards information, but provide it directly, in plain conversational language.

All of this sounds good, and in many ways it is. I believe that for low-stakes, quick queries, like a recipe, a definition, a travel tip or buying advice, AI search can be genuinely useful. And that conversational format also helps you drill down, ask the right follow-up questions and find what you need faster than clicking through a list of links.

But I also think that this improvement in itself is a problem.

The case against better answers

When AI search was obviously more flawed, many of us stayed alert. Now that it’s better and more reliable, I worry that we’re less likely to question it. And the conversational format plays a pretty major role in that.

We’re wired to treat fluent, coherent language as credible. When something reads like a confident explanation, it’s much harder for us to step back and interrogate it — even when we know we should. I’ve written about this same pattern across other areas of AI: in therapy, in relationships, in health advice. It’s very easy to offload our thinking to whatever AI tool we’re using and become way less likely to apply our own judgement.

Ellen Scott, who I spoke to about this in a work context, called it smoothout — a kind of cognitive offloading where the effort of evaluating information gets absorbed by AI. It removes the friction that used to make you think.

Traditional search wasn’t perfect, but it had that friction built in. You’d typically scan a list of links, look at the sources and make quick judgements about credibility. It was active, even when it felt automatic. AI search replaces all of that with a single synthesized answer delivered in a conversational (and sometimes sycophantic) tone. Which means you’re sitting back and receiving information rather than evaluating it.

We know from Pew Research that when an AI summary appears in search results, people are significantly less likely to click through to original sources. So, AI is effectively answering your question and reducing the likelihood that you’ll check it.

The failures that remain

Of course, AI search still isn’t perfectly reliable.

Hallucinations — where a chatbot confidently generates something that isn’t true — haven’t gone away. Citations are also still sometimes misleading or broken.

And there’s another problem: sycophancy. Even though this is something AI companies are actively addressing, we know that AI systems still have a tendency to agree with you. This is often because these systems are optimized to feel like a good and natural conversation, but not necessarily to tell you the truth.

What makes this worse is that the improvements in accuracy make the remaining errors harder to spot. When a tool is obviously unreliable, I think we stay more critical of it. But when it’s mostly right, I worry we stop checking, just like I did in my own experiment.

Building better systems and better judgement

The standard answer here is that people need better media literacy for the AI age, which I believe they do. Understanding what these systems are doing, treating AI outputs as a starting point rather than a conclusion and learning to question fluent, confident language are all incredibly important.

But the times we’re most likely to reach for AI search — during fast-moving situations, when we need answers to high-stakes questions, in emotionally overwhelming events — are exactly the times when verification matters most and critical thinking is hardest.

In previous reporting I’ve spoken to therapists and doctors who’ve noticed the same pattern: patients often turn to AI during moments of crisis or distress, precisely when they’re least likely to scrutinize what they’re being told. That’s why the burden can’t sit entirely with users.

If AI tools are going to sit at the center of how people find information, their design choices matter enormously. That has to mean clear attribution, interfaces that prompt you to check what you’re told and seek out other sources, and tools that show you what they didn’t include, not just what they did.

AI search has gotten better, there’s no doubt about it. I just think we need to be honest about what better actually means for how we find, process and understand information in the long run.
